// EdTech / Career Counselling

GenAI for psychometric assessments that scale beyond the counsellor's calendar

From static questionnaires to a generative assessment engine

// Outcome

Generative scoring narratives delivered during the counselling session instead of days later

// Challenge

The problem, in plain language.

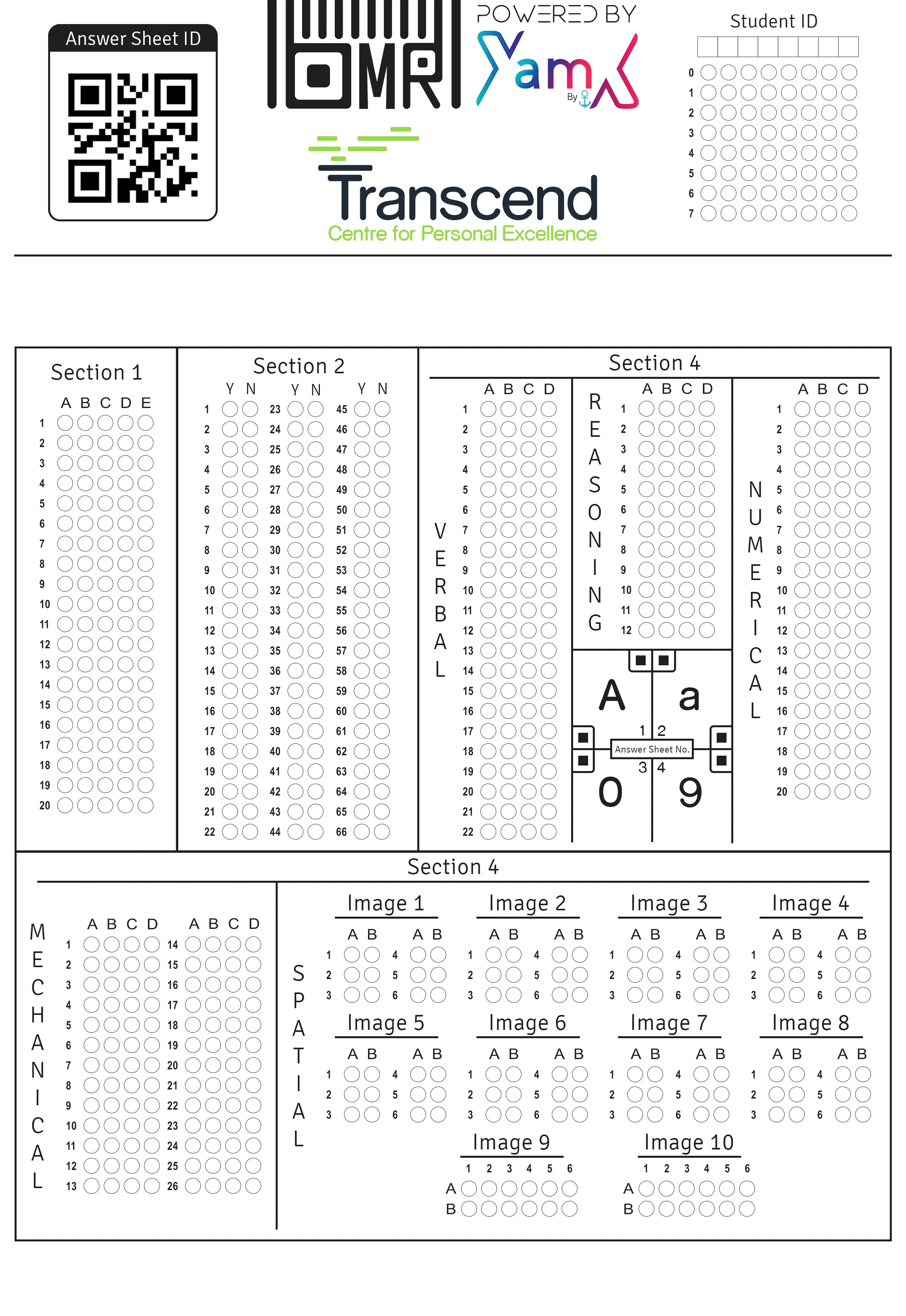

Transcend's career-suitability toolkit leaned on counsellor-led interpretation for every report, which capped how many students the team could serve in a given week. They wanted to keep the psychometric rigour of their instrument while using generative AI to expand the item bank, tailor scoring narratives, and turn a multi-day report turnaround into a same-session experience.

Approach

We started from the existing psychometric instrument, not the model. The counselling team walked us through how they interpret trait clusters, what “good” looks like in a written report, and where a generic LLM answer would feel off-brand or clinically wrong. From that we defined three separate GenAI surfaces — item generation, adaptive scoring commentary, and long-form report drafting — each with its own evaluation rubric, so we could harden one pipeline at a time without touching the validated scoring model underneath.
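To make "each with its own evaluation rubric" concrete, here is a minimal Python sketch of one rubric per GenAI surface. It is illustrative only: the `Rubric` class, the `RUBRICS` registry, and every check in it are hypothetical stand-ins, not the criteria the counselling team actually defined.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# A check takes generated text and returns True when the criterion is met.
Check = Callable[[str], bool]


@dataclass(frozen=True)
class Rubric:
    """A named set of pass/fail checks for one GenAI surface (hypothetical)."""
    surface: str
    checks: Dict[str, Check]

    def evaluate(self, text: str) -> Dict[str, bool]:
        # Run every check and report each result by name.
        return {name: check(text) for name, check in self.checks.items()}

    def passes(self, text: str) -> bool:
        # A draft passes only when every check on its surface passes.
        return all(self.evaluate(text).values())


# One rubric per surface, so each pipeline can be hardened on its own.
# The checks below are placeholder assumptions for illustration.
RUBRICS: Dict[str, Rubric] = {
    "item_generation": Rubric("item_generation", {
        "single_sentence": lambda t: t.strip().count(".") <= 1,
        "no_double_barrel": lambda t: " and " not in t.lower(),
    }),
    "scoring_commentary": Rubric("scoring_commentary", {
        "no_diagnostic_language": lambda t: "diagnos" not in t.lower(),
        "within_length": lambda t: len(t.split()) <= 120,
    }),
    "report_drafting": Rubric("report_drafting", {
        "has_next_steps": lambda t: "next steps" in t.lower(),
    }),
}

if __name__ == "__main__":
    item = "I enjoy solving puzzles."
    print(RUBRICS["item_generation"].evaluate(item))
```

Keeping the rubrics in separate entries means a change to, say, the report-drafting checks cannot alter how generated items are judged, which mirrors the one-pipeline-at-a-time approach described above.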

Solution

We shipped a Next.js assessment portal backed by a FastAPI scoring service. A private item bank feeds a retrieval layer that grounds every generated question in the original construct definitions, and a constrained prompt pipeline drafts counsellor-ready narratives in the practice’s own voice. Counsellors review and edit drafts in an inline editor before a report is finalised, and every approved edit flows back as a preference signal that tunes the next generation. Integrations with the existing proctoring layer keep high-stakes sessions honest without changing the candidate UX.
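The grounding and feedback loop described above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the shipped service: the keyword-overlap retrieval stands in for whatever retrieval layer was actually used, and `Construct`, `retrieve`, `build_item_prompt`, and `PreferenceLog` are hypothetical names.

```python
import re
from dataclasses import dataclass, field
from typing import List, Set, Tuple


@dataclass(frozen=True)
class Construct:
    """One construct definition from the private item bank (hypothetical shape)."""
    name: str
    definition: str


def _tokens(text: str) -> Set[str]:
    # Lowercased alphabetic words; a stand-in for a real embedding or index.
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(query: str, constructs: List[Construct], k: int = 1) -> List[Construct]:
    """Return the k constructs sharing the most words with the query."""
    q = _tokens(query)
    ranked = sorted(
        constructs,
        key=lambda c: len(q & _tokens(c.name + " " + c.definition)),
        reverse=True,
    )
    return ranked[:k]


def build_item_prompt(trait: str, constructs: List[Construct], k: int = 1) -> str:
    """Build a constrained prompt that quotes the retrieved definitions."""
    grounding = retrieve(trait, constructs, k)
    lines = [f"- {c.name}: {c.definition}" for c in grounding]
    return (
        f"Write one assessment item for the trait '{trait}'.\n"
        "Use only these construct definitions:\n" + "\n".join(lines)
    )


@dataclass
class PreferenceLog:
    """Stores (approved, draft) pairs whenever a counsellor changes a draft."""
    pairs: List[Tuple[str, str]] = field(default_factory=list)

    def record(self, draft: str, approved: str) -> None:
        # Unedited drafts carry no preference signal, so they are skipped.
        if draft.strip() != approved.strip():
            self.pairs.append((approved, draft))


if __name__ == "__main__":
    bank = [
        Construct("conscientiousness", "tendency to be organised and dependable"),
        Construct("openness", "curiosity about new ideas and experiences"),
    ]
    print(build_item_prompt("openness to new ideas", bank))
```

How the recorded pairs are later used to tune generation is outside this sketch; the source only states that approved edits flow back as a preference signal.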

What changed

Counsellors now walk out of a session with a finished report in hand instead of a backlog to write up that evening. The generative item bank gives the practice room to refresh tests by cohort without commissioning a new instrument every cycle, and the scoring commentary reads like the team wrote it because, in effect, they did. The measured shift is the outcome stated at the top: scoring narratives delivered during the counselling session instead of days later.

// Client voice

“The platform let our counsellors spend their time on conversations, not report writing. The AI drafts stay close to our framework and our tone — we still sign off on every narrative, but we're no longer the bottleneck.”

Programme Lead, career-counselling practice

// Related services

How we could do this for you.

Generative AI Consulting

Generative AI, applied with taste and judgment.

Opinionated generative AI use-case selection, build vs. buy, and workflows that preserve quality.

AI Product Engineering

AI features that ship like real products.

Design, backend, frontend, and ML — one coordinated team from prototype to production.

// Ready to ship?

Ready to ship something like this?

Short call. No deck. We will tell you honestly whether we are the right team for your problem.